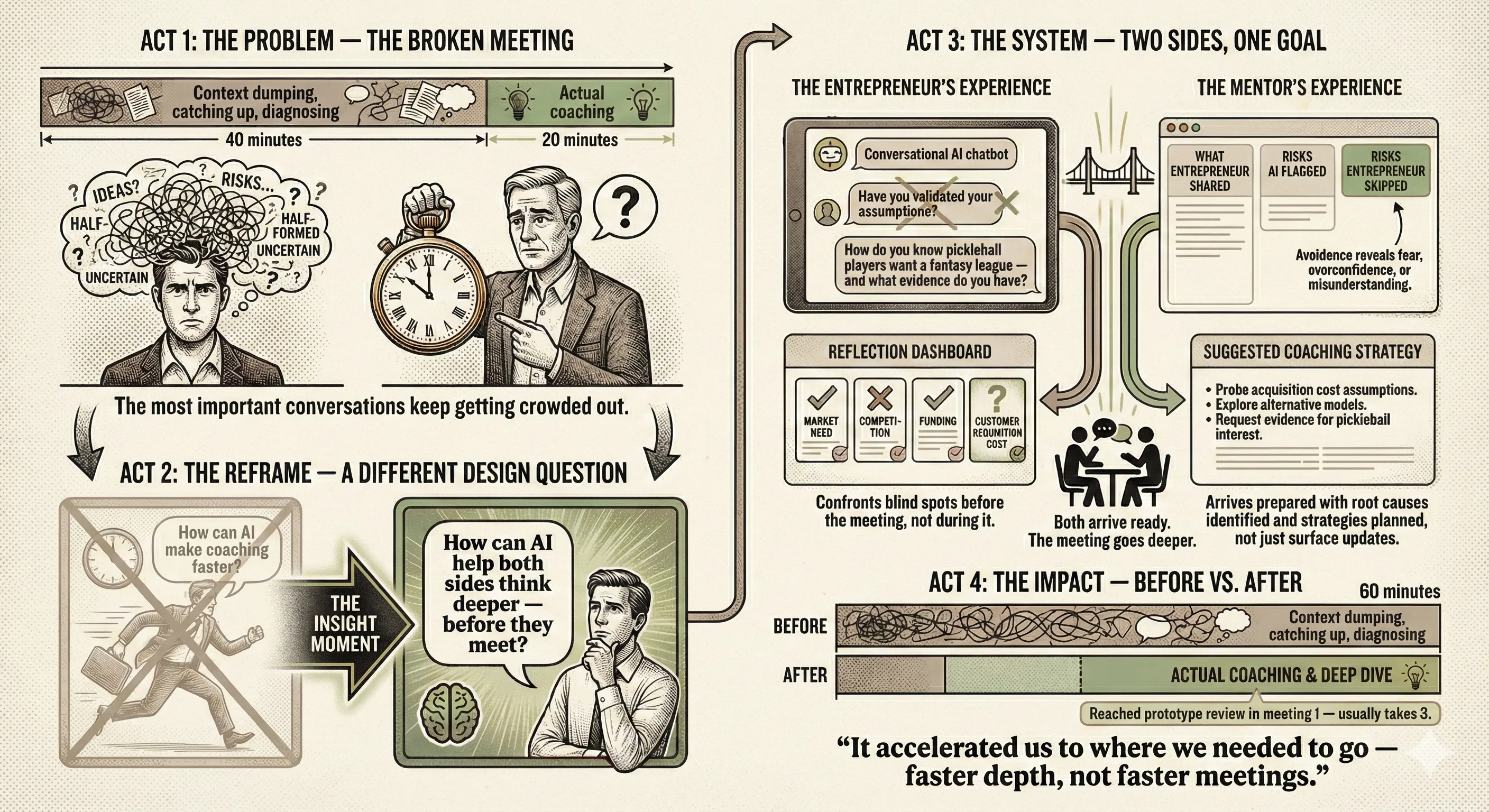

Imagine a first-time founder walking into her weekly mentor meeting with a scattered update, twenty minutes of context-setting, and five minutes left to tackle the real problem. Meanwhile, her mentor is doing rapid-fire diagnosis on the fly, listening, analyzing, and strategizing all at once, with no time to pause and think. This is the norm in entrepreneurship coaching. It’s exhausting, and it leaves the most important conversations on the table.

I set out to redesign this dynamic.

The insight that changed my approach: Early on, I assumed the goal was efficiency: shorter meetings, faster updates, less friction. What I discovered through interviews and prototype testing with mentors and entrepreneurs was different. The problem wasn’t the meeting itself. It was that neither person had done the hard cognitive work before walking in the door.

That realization led to a different design question: not “how can AI make coaching faster?” but “how can AI help both sides think deeper before they meet?”

What I built: Using a Research through Design methodology, I went through five rounds of iterative design and testing before building a human-AI coaching system grounded in a cognitive model of expert mentoring practice. The system has two sides.

For entrepreneurs, it’s a conversational AI that asks adaptive, diagnostic questions before each meeting: not generic prompts like those in a business model canvas, but context-specific questions tailored to their actual project and the specific risks they may be avoiding. When a founder was building a fantasy sports app for pickleball players, the system didn’t ask “have you validated your assumptions?” It asked “how do you know pickleball players want a fantasy league, and what evidence do you have?”

For mentors, the system surfaces a rich dashboard: what the entrepreneur shared, what risks the AI diagnosed, and crucially, what the entrepreneur chose not to prioritize — a window into fear, overconfidence, and avoidance that normally stays invisible. The system then suggests tailored coaching strategies, and mentors can edit the underlying risk model directly in natural language when new patterns emerge.
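To make the two sides concrete, here is a minimal sketch of how the pre-meeting data might be organized. It is not the system's actual implementation: the class names, field names, question templates, and especially the blank-answer rule used to flag a risk are all assumptions for illustration. In the system described here, the AI makes that diagnosis.

```python
from dataclasses import dataclass, field


@dataclass
class Risk:
    """One entry in a hypothetical, mentor-editable risk model."""
    name: str
    question: str  # template; "{project}" is filled in per founder


@dataclass
class PrepSession:
    """A founder's pre-meeting input (all fields are illustrative)."""
    project: str
    answers: dict = field(default_factory=dict)    # risk name -> answer text
    prioritized: set = field(default_factory=set)  # risks the founder chose to work on


def diagnostic_questions(risks, project):
    """Fill each risk's question template with the founder's project."""
    return {r.name: r.question.format(project=project) for r in risks}


def mentor_summary(risks, session):
    """Group a session into the three views shown to the mentor."""
    # Placeholder diagnosis: flag a risk when its answer is blank.
    # This stands in for the AI's judgment and is an assumption.
    diagnosed = [r.name for r in risks
                 if not session.answers.get(r.name, "").strip()]
    # Flagged risks the founder did not choose to work on.
    not_prioritized = [n for n in diagnosed if n not in session.prioritized]
    return {
        "shared": dict(session.answers),
        "diagnosed": diagnosed,
        "not_prioritized": not_prioritized,
    }


risks = [
    Risk("demand", "How do you know people want {project}, and what evidence do you have?"),
    Risk("testing", "What is the smallest test of {project} you could run this week?"),
]
session = PrepSession(
    project="a pickleball fantasy league",
    answers={"demand": "", "testing": "Ask ten players at my club."},
    prioritized={"testing"},
)
questions = diagnostic_questions(risks, session.project)
summary = mentor_summary(risks, session)
print(questions["demand"])
print(summary["not_prioritized"])
```

The `not_prioritized` list is the part that corresponds to the "window into avoidance": it separates what was flagged from what the founder chose to work on, so the gap between the two is visible to the mentor before the meeting.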

What happened in deployment: I deployed the system with one mentor and eleven novice entrepreneurs across real coaching meetings at a university incubator. The meetings didn’t get shorter, but they got fundamentally better. Both sides arrived prepared. Entrepreneurs had already confronted their blind spots. The mentor could skip basic diagnosis and go straight to root causes, including emotional ones. In one case, the mentor identified that a founder’s reluctance to test his idea stemmed from a previous experience of idea theft, and navigated that conversation with intention rather than stumbling into it.

One entrepreneur described it as being “called out, but in a good way.” Another said it helped them go “a layer deeper” than any template had before.

The bigger argument: This project challenges a widespread assumption in AI design that value equals speed. In domains that require judgment, reflection, and trust, the opportunity is different: AI that slows you down in the right moments, scaffolds thinking rather than replacing it, and amplifies what humans can do together. That principle applies well beyond entrepreneurship, to teaching, research advising, clinical supervision, and anywhere that human development is the goal.

Collaborators for this project: Elizabeth Gerber, PhD; Matthew Easterday, PhD; Brylan Donaldson; Mike Raab

Cite this work: Evey Huang, Matthew Easterday, and Elizabeth Gerber. 2025. AI That Helps Us Help Each Other: A Proactive System for Scaffolding Mentor-Novice Collaboration in Entrepreneurship Coaching. Proc. ACM Hum.-Comput. Interact., Vol. 9, No. 7, Article CSCW368 (November 2025). https://doi.org/10.1145/3757549